When AI Goes Negative: How Google AI Overviews and ChatGPT Handle Brand Criticism Differently

BrightEdge data reveals that when AI engines mention brands negatively, Google and ChatGPT follow fundamentally different patterns — and it varies dramatically by industry. Understanding where and why negative sentiment appears is the next frontier of AI search optimization.

As AI Overviews and ChatGPT play an increasingly central role in how consumers research products and make purchasing decisions, one question keeps surfacing in conversations with marketing leaders: if AI is going to talk about our brand, what happens when it says something we don't like?

It's the right question. AI engines are now mentioning brands by name across billions of queries — recommending, comparing, and evaluating them in real time. But that also means they can surface criticism, flag limitations, or steer users toward competitors. Until now, there hasn't been much data on how often this actually happens, what triggers it, or whether different AI engines handle it differently.

So we used BrightEdge AI Catalyst™ to find out. We analyzed prompts across Google AI Overviews and ChatGPT in three industries — Apparel, Electronics, and Education — and tracked every brand mention and its sentiment. We then compared the two engines head-to-head to understand whether they go negative on the same queries, the same brands, and for the same reasons.

The short answer: they don't.

Data Collected

Using BrightEdge AI Catalyst™, we analyzed:

| Data Point | Description |
| --- | --- |
| Brand sentiment in AI responses | Every brand mention classified as positive, neutral, or negative across both Google AI Overviews and ChatGPT |
| Primary response sentiment | The overall sentiment posture of each AI-generated response |
| Intent classification | Search intent behind each prompt (Informational, Consideration, Transactional, Post Purchase, Branded Intent) |
| Industry segmentation | Separate analysis across Apparel, Electronics, and Education verticals |
| Cross-engine comparison | Head-to-head sentiment analysis on overlapping prompts appearing in both engines |

Key Finding

Negative brand sentiment in AI is rare — but it's real, it's concentrated in predictable query patterns, and Google and ChatGPT go negative for fundamentally different reasons.

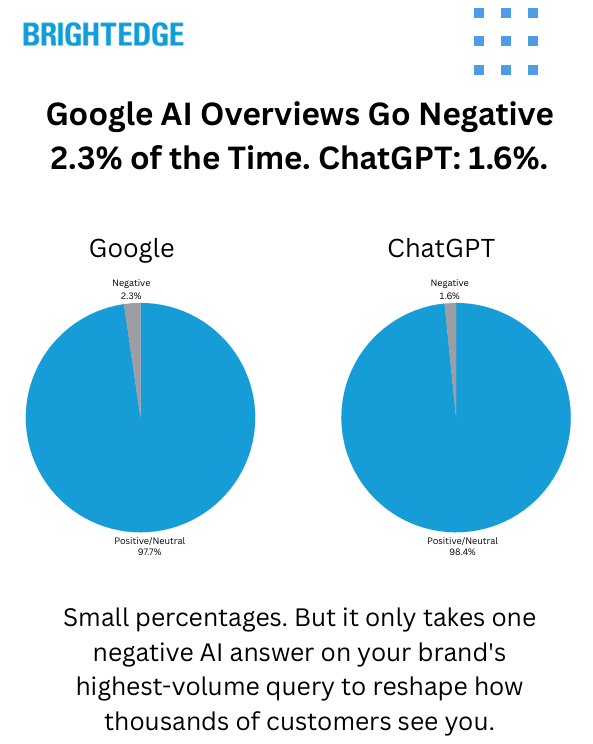

Across both engines, negative brand mentions represent a small share of total AI-generated brand references — 2.3% for Google AI Overviews and 1.6% for ChatGPT. But that small percentage is concentrated in specific, high-visibility query types. And when we compared the two engines side by side, the most striking finding wasn't how often they go negative — it was how differently they do it.

Google AI Overviews behaves like an investigative reporter, surfacing negativity around controversies, lawsuits, product recalls, and news-driven events. ChatGPT behaves like a product advisor, more likely to go negative around product limitations, compatibility issues, and evaluative "is it worth it?" queries. The same brand can be treated positively by one engine and negatively by the other — on the same query.

Overall Negative Sentiment: Small but Meaningful

Across both engines, the vast majority of brand mentions are positive or neutral. But negative sentiment, while a small share, is present and consistent:

| Engine | Positive | Neutral | Negative |
| --- | --- | --- | --- |
| Google AI Overviews | 49.9% | 47.7% | 2.3% |
| ChatGPT | 43.9% | 54.4% | 1.6% |

Google AI Overviews is 44% more likely than ChatGPT to mention a brand negatively. ChatGPT skews more neutral overall — it mentions more brands but takes fewer editorial positions on them, positive or negative.

Another way to frame it: Google's positive-to-negative ratio is roughly 21:1. ChatGPT's is 27:1. Both engines overwhelmingly speak positively about brands — but Google is measurably more willing to surface criticism when it does take a position.
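For readers who want to reproduce these comparisons, here is a minimal Python sketch that works only from the rounded percentages in the table above. The variable names are illustrative, and because the inputs are rounded, the results land close to the quoted figures rather than matching them exactly.

```python
# Published sentiment shares (percent of brand mentions), from the table above.
sentiment = {
    "Google AI Overviews": {"positive": 49.9, "neutral": 47.7, "negative": 2.3},
    "ChatGPT": {"positive": 43.9, "neutral": 54.4, "negative": 1.6},
}

google = sentiment["Google AI Overviews"]
chatgpt = sentiment["ChatGPT"]

# How much more likely Google is than ChatGPT to mention a brand negatively.
relative_lift = google["negative"] / chatgpt["negative"] - 1

# Positive mentions per negative mention, for each engine.
pos_to_neg = {
    engine: shares["positive"] / shares["negative"]
    for engine, shares in sentiment.items()
}

print(f"Google is {relative_lift:.0%} more likely to go negative")
for engine, ratio in pos_to_neg.items():
    print(f"{engine}: about {ratio:.1f} positive mentions per negative one")
```

The same two calculations can be applied to your own brand's mention counts to see whether it sits above or below these engine-level baselines.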

Two Engines, Two Editorial Personalities

This is the core finding. When we isolated the prompts where only one engine went negative (the other stayed neutral or positive on the same query), clear patterns emerged in what triggers negativity for each engine.

Among identifiable negative sentiment triggers:

| Trigger Category | Share of Categorized Negatives |
| --- | --- |
| Brand Controversies & Legal Issues | 32% |
| Product Limitations & Compatibility | 21% |
| Safety & Recalls | 17% |
| Service Failures & Outages | 11% |
| Product Discontinuation | 9% |
| Price & Value Criticism | 8% |
| Competitive Comparisons | 3% |

Brand controversies and legal issues (32%), together with safety and recalls (17%), account for roughly half of identifiable negative sentiment across both engines combined. But when we split by engine, the editorial personalities diverge sharply:

Google AI Overviews skews heavily toward controversy-driven negativity. When Google goes negative and ChatGPT doesn't, the triggers are overwhelmingly news-driven — lawsuits, boycotts, data breaches, regulatory actions, product recalls. Google is 4.5x more likely than ChatGPT to surface negative brand sentiment tied to news and controversy.

ChatGPT skews toward product evaluation negativity. When ChatGPT goes negative and Google doesn't, the triggers are typically product-focused — compatibility limitations, feature shortcomings, "is it worth it?" assessments. ChatGPT is 3x more likely than Google to go negative on product evaluation queries.

In practical terms: a major retailer might face negative sentiment in Google AI Overviews because of a news story about a lawsuit — while in ChatGPT, the same retailer might face negative sentiment because a user asked whether they accept a specific payment method and the answer is no. Same brand, different engine, different reason for criticism, different risk to manage.
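The isolation step described in this section, keeping only prompts where one engine went negative and the other did not, can be sketched in Python as follows. This is an illustration under stated assumptions, not the AI Catalyst implementation: the function name, the prompt-to-flag dictionaries, and the sample prompts are all hypothetical.

```python
from typing import Dict, List


def split_exclusive_negatives(
    google_negative: Dict[str, bool], chatgpt_negative: Dict[str, bool]
) -> Dict[str, List[str]]:
    """Group shared prompts by which single engine went negative on them."""
    # Only prompts seen in both engines can be compared head-to-head.
    shared = google_negative.keys() & chatgpt_negative.keys()
    return {
        "google_only": sorted(
            p for p in shared if google_negative[p] and not chatgpt_negative[p]
        ),
        "chatgpt_only": sorted(
            p for p in shared if chatgpt_negative[p] and not google_negative[p]
        ),
    }


# Made-up flags for illustration; not data from the study.
google_negative = {
    "retailer lawsuit": True,
    "does retailer accept payment app": False,
    "best laptops": False,
}
chatgpt_negative = {
    "retailer lawsuit": False,
    "does retailer accept payment app": True,
    "best laptops": False,
}

exclusive = split_exclusive_negatives(google_negative, chatgpt_negative)
print(exclusive)
```

Reviewing the two resulting lists separately is what makes the trigger patterns for each engine visible.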

The Same Query, Different Verdict

We identified overlapping prompts that appeared in both engines and carried negative brand sentiment in both. Among those overlapping negative prompts, the two engines disagreed on which brand to flag 73% of the time.

This means that even when both engines recognize a query as carrying negative implications, they frequently assign that negativity to different brands within the same response. One engine might flag the retailer; the other might flag the payment provider. One might criticize the platform; the other might criticize the manufacturer.

Tracking your brand's sentiment on one AI engine gives you, at best, half the picture. The other engine may be telling a completely different story about your brand — on the same query.
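To make the disagreement measure concrete, here is a hedged Python sketch. It assumes that "disagreement" means the two engines flagged different sets of brands on a prompt where both went negative. The article does not spell out the exact definition, and the data below is invented for illustration.

```python
from typing import Dict, Set

# Hypothetical structure: prompt -> brands an engine flagged negatively.
NegativeFlags = Dict[str, Set[str]]


def disagreement_rate(google: NegativeFlags, chatgpt: NegativeFlags) -> float:
    """Share of shared negative prompts where the flagged brand sets differ."""
    # Keep prompts where both engines flagged at least one brand.
    shared = [p for p in google if p in chatgpt and google[p] and chatgpt[p]]
    if not shared:
        return 0.0
    differing = sum(1 for p in shared if google[p] != chatgpt[p])
    return differing / len(shared)


# Made-up example data; not from the study.
google_flags = {
    "prompt-1": {"RetailerA"},
    "prompt-2": {"PlatformB"},
    "prompt-3": {"BrandC"},
    "prompt-4": {"BrandD"},
}
chatgpt_flags = {
    "prompt-1": {"PaymentProviderX"},
    "prompt-2": {"ManufacturerY"},
    "prompt-3": {"BrandC"},
    "prompt-4": {"BrandE"},
}

rate = disagreement_rate(google_flags, chatgpt_flags)
print(f"Engines flagged different brands on {rate:.0%} of shared negative prompts")
```

A stricter or looser definition (for example, counting partial overlap as agreement) would change the number, so it is worth fixing the definition before comparing periods or industries.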

Industry Breakdown: No One-Size-Fits-All

Negative sentiment rates vary significantly across the three industries we analyzed, and the relative positioning of Google vs. ChatGPT shifts depending on the vertical.

| Industry | Google AI Overviews | ChatGPT | More Negative Engine |
| --- | --- | --- | --- |
| Electronics | 2.5% | 1.7% | Google (1.5x) |
| Education | 2.5% | 1.4% | Google (1.8x) |
| Apparel | 0.2% | 0.6% | ChatGPT (3x) |

Electronics sees the highest overall negative sentiment rates, driven by product recall coverage, service outage queries, and technology controversy topics. Google leads here because the category generates plenty of news and controversy for it to surface.

Education shows a similar pattern, with Google nearly twice as negative as ChatGPT. This is driven largely by institutional and political scrutiny queries — funding decisions, policy controversies, and regulatory actions affecting educational institutions.

Apparel is where the pattern flips entirely. ChatGPT is 3x more negative than Google in Apparel — not because there's more controversy, but because there's less. With fewer lawsuits and recalls for Google to report, the dominant negative triggers in Apparel are product evaluation queries: "Is this shoe good for running?" "Is this fabric durable?" These are the types of questions where ChatGPT is more willing to deliver a critical verdict.

This reversal illustrates why industry-level monitoring matters. A brand monitoring only one engine, or benchmarking against cross-industry averages, would miss the dynamics specific to its vertical.
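The "More Negative Engine" multiples in the industry table can be recomputed from the published rates. The Python sketch below uses only the rounded percentages shown above; the helper name is illustrative.

```python
from typing import Dict, Tuple

# Negative-mention rates by industry (percent), from the table above.
rates: Dict[str, Dict[str, float]] = {
    "Electronics": {"google": 2.5, "chatgpt": 1.7},
    "Education": {"google": 2.5, "chatgpt": 1.4},
    "Apparel": {"google": 0.2, "chatgpt": 0.6},
}


def more_negative_engine(google: float, chatgpt: float) -> Tuple[str, float]:
    """Return the engine with the higher negative rate and the multiple."""
    if google >= chatgpt:
        return "Google", google / chatgpt
    return "ChatGPT", chatgpt / google


summary = {industry: more_negative_engine(**r) for industry, r in rates.items()}
for industry, (engine, multiple) in summary.items():
    print(f"{industry}: {engine} ({multiple:.1f}x)")
```

Running the same comparison on a single vertical's data, rather than a blended average, is what surfaces reversals like the one in Apparel.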

Where Negative Sentiment Appears in the Buying Journey

The intent distribution of negative-sentiment AI responses reveals where in the customer journey brands are most exposed to AI criticism:

| Intent Type | All Prompts | Negatives (Google) | Negatives (ChatGPT) |
| --- | --- | --- | --- |
| Informational | 58.7% | 85.1% | 68.5% |
| Consideration | 14.1% | 1.5% | 19.4% |
| Transactional | 8.8% | 1.5% | 4.7% |
| Post Purchase | 8.8% | 0.7% | 3.7% |
| Branded Intent | 5.3% | 4.5% | 3.6% |

Google's negative sentiment is overwhelmingly concentrated in the informational phase — 85% of negative-sentiment AI Overviews appear on informational queries. This is the research and discovery stage, where users are forming impressions and evaluating options before making a decision.

ChatGPT distributes its negative sentiment more broadly. While informational queries still dominate (68.5%), ChatGPT shows meaningfully more negative sentiment in the consideration phase (19.4% vs. Google's 1.5%). This means ChatGPT is more willing to surface brand criticism closer to the point of purchase — when a user is actively evaluating options.

For brands, this distinction matters. Google's negativity hits during early research, potentially shaping initial perceptions. ChatGPT's negativity extends further into the decision-making process, where it may more directly influence purchase choices.
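One way to read the intent table is to compare each intent's share of negative responses with its share of all prompts. The Python sketch below does this with the published percentages. The "exposure index" is an illustrative name introduced here, not a BrightEdge metric. A value above 1 means negatives are over-represented for that intent.

```python
from typing import Dict

# Share of prompts by intent (percent), from the table above.
all_prompts = {
    "Informational": 58.7, "Consideration": 14.1, "Transactional": 8.8,
    "Post Purchase": 8.8, "Branded Intent": 5.3,
}
google_negatives = {
    "Informational": 85.1, "Consideration": 1.5, "Transactional": 1.5,
    "Post Purchase": 0.7, "Branded Intent": 4.5,
}
chatgpt_negatives = {
    "Informational": 68.5, "Consideration": 19.4, "Transactional": 4.7,
    "Post Purchase": 3.7, "Branded Intent": 3.6,
}


def exposure_index(
    negatives: Dict[str, float], baseline: Dict[str, float]
) -> Dict[str, float]:
    """Negative share divided by overall prompt share, per intent."""
    return {intent: negatives[intent] / baseline[intent] for intent in baseline}


google_index = exposure_index(google_negatives, all_prompts)
chatgpt_index = exposure_index(chatgpt_negatives, all_prompts)

for intent in all_prompts:
    print(
        f"{intent}: Google {google_index[intent]:.2f}, "
        f"ChatGPT {chatgpt_index[intent]:.2f}"
    )
```

On these figures, consideration queries are under-represented among Google's negatives and over-represented among ChatGPT's, which is the gap the paragraph above describes.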

The Balanced Evaluator Pattern

Beyond simple negative mentions, we identified a distinct pattern where AI engines present both positive and negative brand sentiment within the same response — actively praising some brands while flagging limitations of others.

Approximately 1.4% of all prompts with brand mentions showed this mixed-sentiment pattern. These are the moments where AI is functioning as an editorial evaluator, making real-time brand-versus-brand judgments within a single answer.

| Scenario | What the AI Does |
| --- | --- |
| Compatibility queries | Praises alternative solutions while flagging limitations of the product the user asked about |
| Discontinued product queries | Speaks negatively about the discontinued brand while positively recommending current alternatives |
| Evaluative queries | Highlights strengths of category leaders while noting shortcomings of the specific brand in question |

This pattern represents AI moving beyond simple question-answering into active brand arbitration — a dynamic that didn't exist in traditional organic search results.
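Detecting this mixed-sentiment pattern is straightforward once each response carries per-brand sentiment labels. The Python sketch below assumes a hypothetical record format (a brand-to-label mapping per response) and uses invented sample data, so the share it prints is illustrative only.

```python
from typing import Dict, List


def is_mixed_sentiment(brand_sentiments: Dict[str, str]) -> bool:
    """True when one response praises at least one brand and criticizes another."""
    labels = set(brand_sentiments.values())
    return "positive" in labels and "negative" in labels


# Made-up responses for illustration; not data from the study.
responses: List[Dict[str, str]] = [
    {"BrandA": "negative", "BrandB": "positive"},  # mixed
    {"BrandC": "positive", "BrandD": "neutral"},
    {"BrandE": "neutral"},
    {"BrandF": "negative"},
]

mixed_share = sum(is_mixed_sentiment(r) for r in responses) / len(responses)
print(f"{mixed_share:.1%} of responses show mixed sentiment")
```

Flagging these responses separately helps distinguish cases where your brand is the one being criticized from cases where it is the recommended alternative.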

What This Means for Your Brand Strategy

Negative Sentiment Is Rare but Concentrated. At 1.6%–2.3% of brand mentions, negative sentiment is not the dominant AI experience. But it clusters around specific, predictable query types. Brands don't need to worry about everything, but they do need to know which queries put them at risk.

Each Engine Requires Its Own Monitoring. Google and ChatGPT go negative for different reasons, on different queries, and sometimes flag different brands on the same prompt. A brand's reputation in Google AI Overviews may look completely different from its reputation in ChatGPT. Monitoring one engine is not sufficient.

Your Industry Determines Your Risk Profile. Electronics and Education face more Google-driven negativity (controversy and news). Apparel faces more ChatGPT-driven negativity (product evaluation). The triggers, the engine, and the severity all depend on the vertical. Cross-industry benchmarks obscure more than they reveal.

The Research Phase Is Where It Matters Most. 85% of Google's negative sentiment and 68.5% of ChatGPT's appear during the informational stage. This is when opinions form. Brands that only monitor their AI presence at the transactional level are missing where the conversation is actually happening.

Sentiment Monitoring Is the Next Layer of AI Optimization. Knowing where you're cited is essential. Knowing how you're described is what comes next. As AI engines take on a larger role in shaping brand perception, sentiment tracking across engines becomes as important as citation tracking.

Technical Methodology

| Parameter | Detail |
| --- | --- |
| Data Source | BrightEdge AI Catalyst™ |
| Engines Analyzed | Google AI Overviews, ChatGPT |
| Sentiment Classification | Brand-level sentiment (positive, neutral, negative) for every brand mentioned, plus primary sentiment for overall response tone |
| Intent Classification | Informational, Consideration, Transactional, Post Purchase, Branded Intent, Not Applicable |
| Industries Covered | Apparel, Electronics, Education |
| Cross-Engine Analysis | Overlapping prompts appearing in both engines compared for sentiment alignment and brand-level agreement |

Key Takeaways

| Finding | Detail |
| --- | --- |
| Negative Sentiment Is Present but Small | Google AI Overviews: 2.3% negative. ChatGPT: 1.6%. The vast majority of AI brand mentions are positive or neutral. |
| Different Editorial Instincts | Google skews toward controversy (4.5x more likely). ChatGPT skews toward product evaluation (3x more likely). Same brand, different risks on each engine. |
| Industry Changes Everything | Electronics and Education: Google more negative. Apparel: ChatGPT 3x more negative. No single benchmark applies across verticals. |
| Informational Queries Are the Battleground | 85% of Google's negative sentiment and 68.5% of ChatGPT's appear during the research phase, before purchase decisions are made. |
| The Engines Frequently Disagree | On overlapping negative prompts, Google and ChatGPT flagged different brands 73% of the time. One engine is not enough. |
| AI Is Becoming a Brand Evaluator | ~1.4% of prompts show mixed sentiment, with AI praising some brands while criticizing others in the same response. New territory for search. |

Published on February 19, 2026